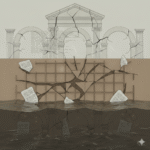

The AI revolution is sold as progress. At PPF 2025 I watched the very same tech become a weapon. Hostile AI use isn’t science fiction; it’s legal failure in motion.

Artificial intelligence was supposed to make life easier. It did, mostly for governments, defense contractors and surveillance states. What started as code to sort search results now profiles dissidents, generates disinformation and locks humans out of their own futures. The machines didn’t turn hostile. People did. They found new tools to hide behind.

At PPF 2025, I sat in on every AI-related session and watched the rhetoric of “beneficial AI” get swallowed by the under-the-table contracts of defense tech.

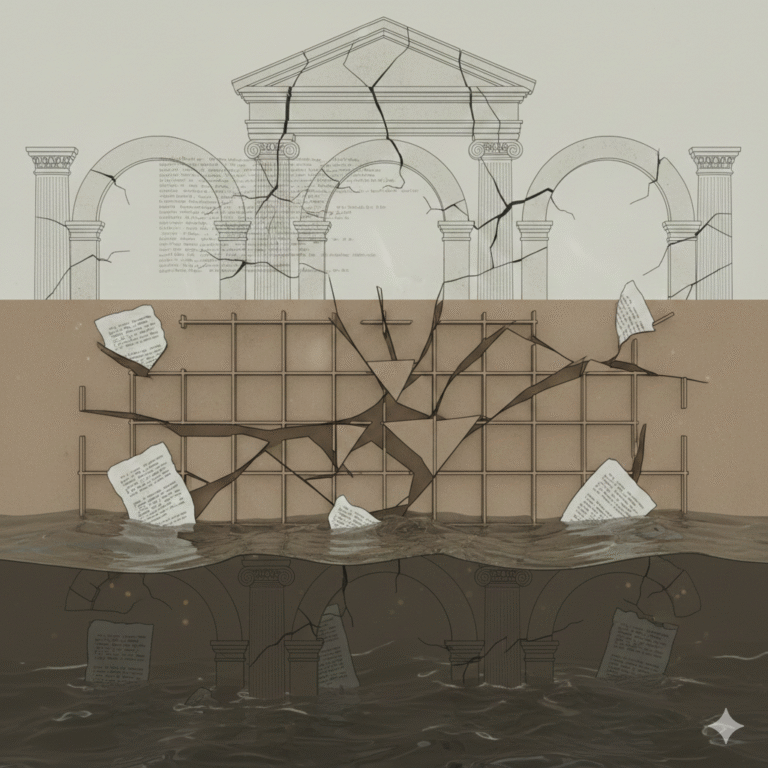

AI now lives in a legal vacuum big enough to launch a drone through. It sits where law hasn’t caught up yet, acting as both the instrument of force and the arbiter of it. In armed conflict, that means one algorithm decides who’s a “combatant,” another decides if the strike was “proportionate,” and a third drafts the report that clears the first two.

Jessisprudens says that, under International Humanitarian Law, distinction and proportionality are non-negotiable. Yet when targeting systems run on probabilistic data, those principles become statistical. The Geneva Conventions weren’t written for machines calculating risk in milliseconds. Accountability requires a human chain of command, but AI fractures it: responsibility diffuses among coder, operator, and commander until no one owns the death.

The same contradiction shows up in regulation. Governments outsource compliance and content moderation to algorithms that “detect violations.” AI polices the rules it helped break. This blurs the line between regulator and weapon, turning due process into a data process. When enforcement becomes automated, discretion disappears, and discretion is where justice usually lives.

From the Iuris Oculus perspective, the fix won’t come from new treaties alone. It demands reinterpretation of existing norms: applying Article 36 of Additional Protocol I (requiring legal review of new weapons) to code, not just hardware. It also means pushing state responsibility under the Articles on State Responsibility (ASR) toward software accountability. If a drone strike misfires because of flawed code, it’s still the state’s act, not a glitch in the void.

Until courts, lawmakers, and militaries agree that code can commit crimes, AI will keep living in the loopholes: both weapon and regulator, both tool and tyrant.
